Best Presale Coin To Watch in 2026: Earth Version 2 (EV2) and Other Tokens Stand Out Heading Into 2026

The cryptocurrency market is heating up again as investors seek early opportunities ahead of an anticipated 2026 bull run. Presale tokens are gaining popularity because they can offer strong potential returns and distinctive real-world applications. These projects span a diverse range of areas, including gaming, artificial intelligence, and blockchain infrastructure.

This new wave of presales shows how fast innovation is moving into the Web3 world. Some tokens are introducing smart utilities that could reshape digital ownership and online economies. With its compelling token model and immersive gaming concept, Earth Version 2 (EV2) has emerged as one of the rising stars in the blockchain space. Projects like Bitcoin Hyper, EcoYield, Maxi Doge, and SUBBD are also gaining popularity alongside it.

1. Earth Version 2 (EV2)

Earth Version 2 (EV2) is an open-world sci-fi looter shooter built for next-generation Web3 gamers. Players battle other players for valuable loot, explore alien-infested worlds, and gather rare equipment. In EV2, every combat suit has special flight mechanics, customizable weapons, and potent abilities that influence every fight.

EV2 runs on Avalanche’s C-Chain, ensuring fast transactions and scalability. The token sale, however, is held only on the Ethereum network. With this strategy, EV2 benefits from the best of both worlds: Avalanche’s performance for gaming and Ethereum’s liquidity for investors.

The $EV2 token powers the entire ecosystem. Players use it to upgrade their weapons, trade NFTs, and stake. A total of 2.88 billion tokens exist, with 40% available during the presale. By accepting ETH, BTC, BNB, USDT and SOL, the project gives gamers and cryptocurrency enthusiasts worldwide access.

EV2 introduces a dynamic mode called Fracture, a 25-player survival match filled with chaos and strategy. Players collect glowing cubes to reveal a secret relic, then fight to survive as the hunted carrier. The tagline says it best: One Fracture. One Legend.

Its roadmap highlights a Q4 2025 presale launch, Q1 2026 partnerships, and a Q2 2026 full game release. EV2 provides one of the most cutting-edge blockchain gaming experiences by fusing cross-platform play, NFT integration, and deep storytelling. The project bridges traditional gaming with real token rewards, making it a standout presale ahead of 2026.

2. Bitcoin Hyper (HYPER)

Bitcoin Hyper is one of the more anticipated presales targeting the Bitcoin ecosystem. It uses the Solana Virtual Machine (SVM) framework to introduce a Layer 2 scaling solution. This design enables faster transactions and lower gas fees while maintaining compatibility with Bitcoin’s core network.

The project focuses on bridging Bitcoin with decentralized finance. It intends to provide tokenized assets, smart contracts, and staking in a safe setting. The presale priced HYPER around $0.0132 per token, drawing early infrastructure investors. As Bitcoin adoption grows, Bitcoin Hyper could play a major role in improving scalability and functionality within the Bitcoin ecosystem.

3. EcoYield (EYE)

EcoYield blends renewable energy, blockchain, and artificial intelligence. It builds modular data centers powered by clean energy sources like solar and wind. These facilities lease GPU power to blockchain projects and AI developers who require computational resources.

In this ecosystem, the EYE token serves as the primary utility. Data center partnerships allow users to earn energy credits, stake tokens, and share profits. EcoYield targets the growing real-world asset market, connecting blockchain innovation to physical infrastructure. Its emphasis on sustainability and token utility may draw in eco-aware investors looking for genuine financial gain in the cryptocurrency market.

4. Maxi Doge (MAXI)

Maxi Doge combines real utility with meme culture. Through NFT-based competitions, trading challenges, and staking pools, it emphasizes community involvement. The MAXI ecosystem also introduces deflationary token burns and decentralized governance for its growing user base.

Its presale raised more than $3.9 million, backed by viral marketing and active online communities. The project rewards each week’s top traders and provides continuous liquidity incentives. Maxi Doge aims to be more than just a meme coin by introducing gamified token utilities. Its environmentally friendly design might keep users interested after the presale.

5. SUBBD (SUBBD)

SUBBD focuses on empowering creators in the digital economy. It offers blockchain and artificial intelligence (AI) tools to assist creators with royalties, payment automation, and performance analysis of their content. With this setup, independent creators have greater control over their output and revenue.

The SUBBD token powers platform transactions and premium AI tools. Early investors receive governance privileges and tiered bonuses. To increase adoption, the project intends to form collaborations with media agencies and influencer networks. As the global creator economy continues to grow, SUBBD’s decentralized approach could reshape how creators earn and collaborate online.

Final Take

Each presale project offers a different earning model and tokenomics. Earth Version 2 leads this list with a supply of 2.88 billion tokens, 40% of which goes toward player rewards and presale incentives. Its dual-currency setup, EV2 tokens and Holocrons, balances gameplay and economy. EcoYield uses energy-backed profit sharing, while Bitcoin Hyper rewards network validators. Maxi Doge encourages participation through competitions and staking, whereas SUBBD links profits to the work of creators. Together, these models show how innovation, fairness, and active participation can enhance long-term value in Web3 ecosystems.

$EV2 Presale

Website: https://ev2.funtico.com/

Telegram: https://t.me/EV2_Official

X: https://x.com/EV2_Official

The post Best Presale Coin To Watch in 2026: Earth Version 2 (EV2) and Other Tokens Stand Out Heading Into 2026 appeared first on CryptoPotato.

You May Also Like

The Stunning ASEAN Winner Emerges As Manufacturing Shifts Accelerate

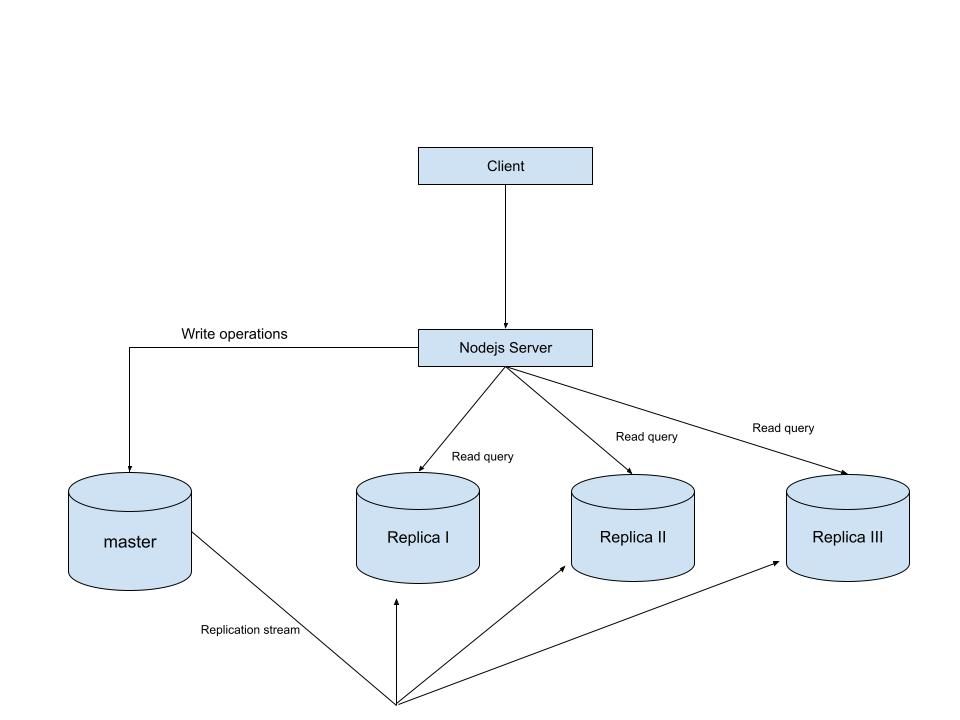

MySQL Single Leader Replication with Node.js and Docker

    command: --server-id=1 --log-bin=ON

The --server-id option gives each MySQL server in your replication setup its own name tag. Each ID has to be unique, and without it replication won’t work at all. Another useful option not included here is binlog_format=ROW, which tells MySQL how to keep track of changes before passing them along to the replicas. By default, MySQL already uses row-based replication, but you can explicitly set it to ROW to be sure, or switch it to STATEMENT if you’d rather log the actual SQL statements instead of row-by-row changes.

Run our containers on Docker

Now, in the terminal, we can run the following command to spin up our database containers:

    docker-compose up -d

Setting Up Our Master (Primary) Server

To configure our master server, we first have to access the running instance on Docker using the following command:

    docker exec -it mysql-master bash

This command opens an interactive Bash shell inside the running Docker container named mysql-master, allowing us to run commands directly inside that container.

Now that we’re inside the container, we can access the MySQL server and start running commands. Type:

    mysql -uroot -p

This will log you into MySQL as the root user. You’ll be prompted to enter the password you set in your docker-compose.yml file.

Next, we need to create a special user that our replicas will use to connect to the master server and pull data. Inside the MySQL prompt, run the following commands:

    CREATE USER 'repl_user'@'%' IDENTIFIED BY 'replication_pass';
    GRANT REPLICATION SLAVE ON *.* TO 'repl_user'@'%';
    FLUSH PRIVILEGES;

Here’s what’s happening:

CREATE USER makes a new MySQL user called repl_user with the password replication_pass.

GRANT REPLICATION SLAVE gives this user permission to connect as a replica and read the master’s binary log. The *.* scope is required because this is a global privilege.

FLUSH PRIVILEGES tells MySQL to reload the privilege tables. It is not strictly required after CREATE USER and GRANT, which take effect immediately, but it is harmless.

Time to Configure the Replica (Secondary) Servers

a. First, let’s access the replica containers the same way we did with the master. Run these commands in your terminal for each of the replica containers:

    docker exec -it <replica_container_name> bash
    mysql -uroot -p

Replace <replica_container_name> with the name of the replica container you are setting up.

b. Now it’s time to tell our replica where to get its data from. While inside the replica’s MySQL shell, run the following command to configure replication using the master’s details:

    CHANGE REPLICATION SOURCE TO
      SOURCE_HOST='mysql-master',
      SOURCE_USER='repl_user',
      SOURCE_PASSWORD='replication_pass',
      GET_SOURCE_PUBLIC_KEY=1;

With the replication settings in place, let’s fire up the replica and get it syncing with the master. Still inside the MySQL shell on the replica, run:

    START REPLICA;

This starts the replication process. To make sure everything is working, check the replica’s status with:

    SHOW REPLICA STATUS\G

The \G terminator already ends the statement, so no trailing semicolon is needed. Look for Replica_IO_Running and Replica_SQL_Running. If both say Yes, congratulations! 🎉 Your replica is now successfully connected to the master and replicating data in real time.
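The same check can be done from code once a status row is available. The sketch below is only an illustration: summarizeReplicaStatus is a hypothetical helper, not part of MySQL or any driver, and the two sample rows are made-up values that use the column names reported by SHOW REPLICA STATUS in MySQL 8.0.22 and later.

```javascript
// Hypothetical helper: condenses one row of SHOW REPLICA STATUS output.
function summarizeReplicaStatus(row) {
  // Both threads must report "Yes" for replication to be flowing.
  const ioRunning = row.Replica_IO_Running === "Yes";
  const sqlRunning = row.Replica_SQL_Running === "Yes";
  const lag = row.Seconds_Behind_Source;

  return {
    healthy: ioRunning && sqlRunning,
    ioRunning,
    sqlRunning,
    // MySQL reports NULL for the lag while replication is not running.
    lagSeconds: lag === null || lag === undefined ? null : Number(lag),
    lastError: row.Last_IO_Error || row.Last_SQL_Error || null,
  };
}

// Made-up sample rows, for illustration only.
const healthyRow = {
  Replica_IO_Running: "Yes",
  Replica_SQL_Running: "Yes",
  Seconds_Behind_Source: 0,
  Last_IO_Error: "",
  Last_SQL_Error: "",
};
const brokenRow = {
  Replica_IO_Running: "Connecting",
  Replica_SQL_Running: "Yes",
  Seconds_Behind_Source: null,
  Last_IO_Error: "error connecting to source",
  Last_SQL_Error: "",
};

console.log(summarizeReplicaStatus(healthyRow));
console.log(summarizeReplicaStatus(brokenRow));
```

In a real setup the row would come from running SHOW REPLICA STATUS against each replica, for example through a mysql2 connection. That wiring is left out here so the sketch runs without a database.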

Testing Our Replication Setup from the Node.js App

Now that our replication is successfully set up, we can configure our Node.js server to observe the real-time effect of data being replicated from the master server to the replica servers whenever we write to it. We start by installing the following dependencies:

    npm i express mysql2 sequelize

Now create a folder called src in the root directory and add the following files inside that folder: connection.js, index.js and model.js. We can now set up our connections to the master and replica servers in the connection.js file as shown below:

    const { Sequelize } = require("sequelize");

    // Writes go to the master; reads are spread across the three replicas.
    const sequelize = new Sequelize({
      dialect: "mysql",
      replication: {
        write: {
          host: "127.0.0.1",
          username: "root",
          password: "master",
          database: "replicaDb",
        },
        read: [
          { host: "127.0.0.1", username: "root", password: "slave", database: "replicaDb", port: 3307 },
          { host: "127.0.0.1", username: "root", password: "slave", database: "replicaDb", port: 3308 },
          { host: "127.0.0.1", username: "root", password: "slave", database: "replicaDb", port: 3309 },
        ],
      },
    });

    // Verify at startup that a connection can be established.
    async function connectdb() {
      try {
        await sequelize.authenticate();
      } catch (error) {
        console.error("❌ unable to connect to the follower database", error);
      }
    }

    connectdb();

    module.exports = {
      sequelize,
    };

We can now create a User table in the model.js file:

    const { DataTypes } = require("sequelize");
    const { sequelize } = require("./connection");

    // A User has a required name and a required, unique email.
    const User = sequelize.define("User", {
      name: {
        type: DataTypes.STRING,
        allowNull: false,
      },
      email: {
        type: DataTypes.STRING,
        unique: true,
        allowNull: false,
      },
    });

    module.exports = User;

Finally, in our index.js file we can start our server and listen for connections on port 3000. In the code sample below, all inserts and updates are routed by Sequelize to the master server, while all read queries are routed to the read replicas.

    const express = require("express");
    const { sequelize } = require("./connection");
    const User = require("./model");

    const app = express();
    app.use(express.json());

    async function main() {
      // Create or update the tables before accepting requests
      await sequelize.sync({ alter: true });

      app.get("/", (req, res) => {
        res.status(200).json({
          message: "first step to setting server up",
        });
      });

      app.post("/user", async (req, res) => {
        const { email, name } = req.body;
        try {
          // build() is synchronous; save() must be awaited so the INSERT
          // finishes on the write (master) connection before we respond
          const newUser = User.build({ name, email });
          await newUser.save({ returning: false });
          res.status(201).json({
            message: "User successfully created",
          });
        } catch (error) {
          res.status(500).json({ message: "Unable to create user" });
        }
      });

      app.get("/user", async (req, res) => {
        // This SELECT query will go to one of the read replicas
        const users = await User.findAll();
        res.status(200).json(users);
      });

      app.listen(3000, () => {
        console.log("server has connected");
      });
    }

    main().catch((error) => console.error("failed to start server", error));

When you make a POST request to the /user endpoint, take a moment to check both the master and replica servers to observe how data is replicated in real time. Right now, we are relying on Sequelize to automatically route requests, which works for development but isn’t robust enough for a production environment. In particular, if the master node goes down, Sequelize cannot automatically redirect requests to a newly elected leader. In the next part of this series, we’ll explore strategies to handle these challenges.
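To picture the read/write split that the app relies on, here is a toy router. It is a minimal sketch under stated assumptions and does not show Sequelize's internals: the ReadWriteRouter class, the node labels, and the default port 3306 for the master are all made up for illustration. It also shows why a fixed write target is fragile, since nothing in it can discover a new leader.

```javascript
// Illustrative only: a toy model of read/write splitting, not Sequelize code.
class ReadWriteRouter {
  constructor(writeNode, readNodes) {
    this.writeNode = writeNode; // single leader that accepts writes
    this.readNodes = readNodes; // followers that serve reads
    this.nextRead = 0;          // index of the next replica to use
  }

  // Choose a node from the statement's leading keyword.
  pick(sql) {
    const isRead = /^\s*(select|show)\b/i.test(sql);
    if (!isRead || this.readNodes.length === 0) {
      return this.writeNode;
    }
    // Rotate through the replicas in order (round-robin).
    const node = this.readNodes[this.nextRead];
    this.nextRead = (this.nextRead + 1) % this.readNodes.length;
    return node;
  }
}

// Hypothetical labels; 3306 is assumed because the write config sets no port.
const router = new ReadWriteRouter("master:3306", [
  "replica:3307",
  "replica:3308",
  "replica:3309",
]);

console.log(router.pick("INSERT INTO Users (name, email) VALUES ('a', 'a@example.com')"));
console.log(router.pick("SELECT * FROM Users"));
console.log(router.pick("SELECT * FROM Users"));
```

Because writeNode is fixed when the router is built, a failed master leaves every write pointing at a dead address. That is the gap the closing paragraph describes.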