Google DeepMind Uncovers Six Critical Attack Vectors Targeting AI Agents

Key Takeaways

- Google DeepMind identifies six distinct attack vectors threatening AI agent security

- Covert HTML commands can redirect AI agent behavior without visible detection

- Strategically crafted language manipulates AI agents into performing malicious operations

- Contaminated information sources compromise AI agent memory and decision-making

- Enterprise AI agents encounter escalating threats in interconnected digital ecosystems

A groundbreaking study from Google DeepMind has uncovered six distinct vulnerability pathways that enable attackers to compromise AI agents operating in digital environments. The research demonstrates how malicious actors can exploit web-based content, concealed directives, and corrupted information repositories to manipulate autonomous systems. These discoveries underscore mounting security challenges as organizations increasingly rely on AI agents for mission-critical operations throughout connected infrastructures.

Hidden Instructions and Persuasive Tactics Target Agent Decision-Making

The research team pinpointed content injection as a primary vulnerability affecting AI agents during web navigation. Malicious actors embed invisible directives within HTML markup or metadata structures that redirect agent behavior while remaining undetectable to human observers. This approach allows attackers to issue commands through concealed page components that AI systems interpret as legitimate instructions.

Semantic attacks represent another critical threat vector that leverages convincing language patterns instead of technical exploits. Threat actors construct web content using authoritative presentation styles and logical narrative frameworks designed to circumvent protective measures. These sophisticated psychological techniques cause AI agents to classify dangerous directives as authentic operational requests.

Both exploitation methods capitalize on fundamental mechanisms governing how AI agents evaluate and act upon digital information during autonomous operations. The findings reveal that carefully engineered prompts can systematically alter reasoning processes in ways that evade detection. Adversaries successfully redirect AI agent workflows toward harmful objectives without activating security protocols.

Data Poisoning and Action Hijacking Create Persistent Threats

DeepMind researchers discovered that threat actors can compromise the knowledge repositories that AI agents consult for information retrieval and context building. Through strategic insertion of falsified content into authoritative data sources, attackers establish lasting influence over system outputs and behavioral patterns. This contamination causes AI agents to integrate fabricated information into their operational knowledge base, treating manufactured data as validated facts.

Direct behavioral manipulation represents an immediate danger to AI agents performing standard browsing activities. Adversaries embed jailbreak sequences and override commands that neutralize built-in limitations and activate prohibited functions. AI agents configured with elevated system privileges become particularly vulnerable, potentially exposing confidential information or executing unauthorized data transfers to external endpoints.

The study emphasizes that vulnerability levels intensify proportionally with the autonomy granted to AI agents and their integration depth within organizational systems. Malicious actors exploit standard operational procedures to inject harmful instructions into everyday workflows. Risk exposure multiplies significantly when AI agents interface with third-party tools, application programming interfaces, and external service ecosystems.

Coordinated Attacks and Human Oversight Gaps Magnify Consequences

Researchers caution that systemic vulnerabilities can simultaneously compromise multiple AI agents operating across distributed networks. Synchronized manipulation campaigns may produce chain-reaction failures comparable to algorithmic trading disruptions that cascade through financial markets. AI agents functioning within shared computational environments create conditions where individual compromises propagate rapidly across organizational boundaries.

Human verification processes embedded within AI agent workflows contain exploitable weaknesses that adversaries systematically target. Attackers engineer outputs with superficial credibility markers that successfully navigate human review checkpoints. This enables AI agents to execute harmful operations after obtaining human authorization based on deceptive presentations.

The research situates these security findings within the accelerating trend of AI integration across commercial sectors. Modern AI agents routinely manage communications, procurement decisions, and cross-system coordination through fully automated mechanisms. Establishing robust security frameworks for operational environments has become equally vital as advancing core model architectures.

The DeepMind team advocates implementing adversarial training protocols, comprehensive input validation systems, and continuous behavioral monitoring to mitigate identified risks. Their analysis highlights the current fragmented state of defensive measures and absence of unified industry security standards. As AI agents assume expanding responsibilities throughout enterprise operations, developing coordinated protection strategies becomes increasingly imperative.

The post Google DeepMind Uncovers Six Critical Attack Vectors Targeting AI Agents appeared first on Blockonomi.

Ayrıca Şunları da Beğenebilirsiniz

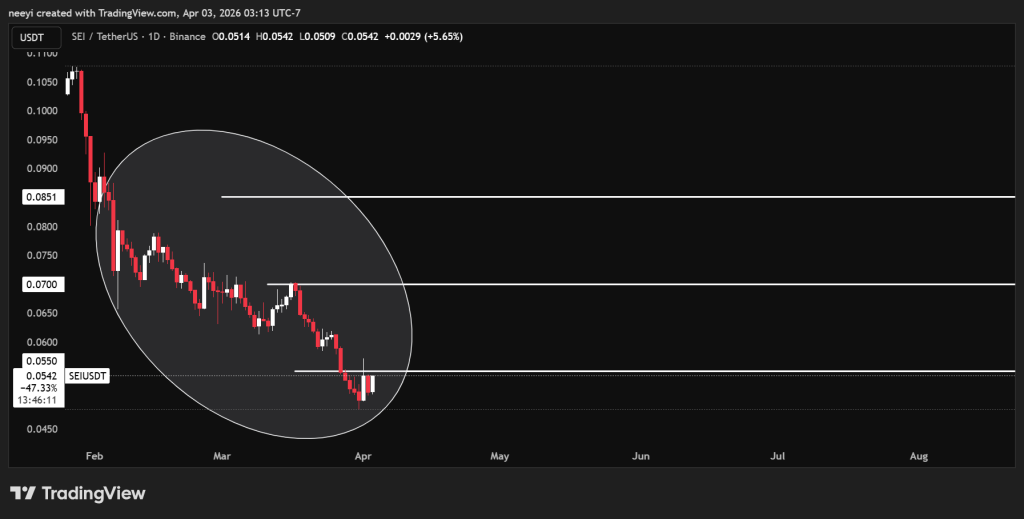

SEI Price Continues Lower: Can the Giga Upgrade Trigger a Full Reversal?

Bitcoin ETFs Surge with 20,685 BTC Inflows, Marking Strongest Week